What is the Proxmark3?

The Proxmark3 is a fascinating device. It can read and write a wide range of RFID cards, both low-frequency (typically things like door access cards) and high-frequency (more advanced cards: transit, credit cards, etc.). It can also be frustrating, largely due to:

- Inconsistent support across OSes

- Unclear documentation about identifying RFID cards you find

Mac OS X Mavericks + Proxmark3 == sadpanda

TL;DR: don’t try to work with the Proxmark3 on OS X. It has just enough support to keep you trying, without enough to actually help you excel.

There are no official binaries for OS X, and no unofficial ones either, so we must go to the source.

To compile, make sure you have everything listed in COMPILING.txt. Some of these dependencies are more obvious than others.

For example, libreadline:

brew install readline

This, however, will not link because a conflicting library is installed with Xcode. To override that, use

brew link readline --force

The ARM compiler is easy to install (according to this thread) via github.com/jsnyder/arm-eabi-toolchain. That thread also advises you to use

brew install libusb libusb-compat --universal

for libusb.

Once you’re pretty confident you have most of these dependencies installed, you can attempt to make the project. If it works, good for you. If not, you’ll spend a bunch of time googling. You will almost certainly have to modify CXXFLAGS and QTLDLIBS in client/Makefile. There are a few recommendations as to what these should be, but it’s unclear which is best.
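As a rough sketch of where such flags come from: Homebrew installs readline under its own prefix, which `brew --prefix readline` prints, and the compiler and linker need to be pointed at that prefix. The helper name `lib_flags` is made up for illustration, and which Makefile variable the result belongs in is exactly the unclear part, so treat this as a starting point and not a known-good configuration.

```shell
# Print include and linker search flags for a library installed under a prefix.
# Hypothetical helper: the name and the flag layout are assumptions, not
# something taken from the Proxmark3 Makefile.
lib_flags() {
    prefix="$1"
    printf '%s\n' "-I$prefix/include -L$prefix/lib"
}

# Example use on a machine with Homebrew (commented out so the sketch runs anywhere):
# lib_flags "$(brew --prefix readline)"
```

The printed flags would then be appended by hand to the relevant variables in client/Makefile.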

Hopefully, at this point, you can compile the application. It is run from the client directory via

./proxmark3 /dev/ttysXXX.usbXXXX

Unfortunately, if you’re like me, you won’t have any such devices.
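A quick way to check whether any candidate device exists is to list the USB serial nodes under /dev. This is only a sketch: the function name `list_usb_serial` is hypothetical, and the `tty.usb*` and `cu.usb*` patterns are my assumption about how OS X names USB serial devices, not something the Proxmark3 documentation promises.

```shell
# List candidate USB serial device nodes under a directory (default: /dev).
# The glob patterns are assumptions about OS X device naming.
list_usb_serial() {
    dev_dir="${1:-/dev}"
    for node in "$dev_dir"/tty.usb* "$dev_dir"/cu.usb*; do
        # An unmatched glob stays as literal text, so confirm the path exists.
        if [ -e "$node" ]; then
            printf '%s\n' "$node"
        fi
    done
    return 0
}

list_usb_serial
```

If this prints nothing with the Proxmark3 plugged in, the device has not registered as a serial port, which points at the firmware or driver issues described next.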

To further compound matters, there is a decent chance that your proxmark3 is actually running old firmware and needs to be updated. There was a pretty big shift in the firmware: it used to use libusb but now registers itself as a COM port. I think the change was performance related, but the result is that older firmware can’t use newer software and vice versa. Flashing from the old firmware to the new requires a bit of a hybrid approach combined with witch magic.

It’s all about the kexts

There is also another issue looming that you might not realize. OS X is going to hijack your USB connection and not let you use it. The proxmark3 app will complain about not being able to claim the device.

Allegedly, you can use what is called a “codeless kext” to force OS X to ignore a device. That is what make install_kext is meant to achieve. This didn’t seem to work for me. I tried poking around for a bit: Apple has a concept of a “VendorSpecificDriver” that is meant to let you stop OS X from claiming the device before you can. kextutil will become your friend for debugging as you attempt to combine this “VendorSpecificDriver” code with the kext created by the Makefile, in a sweet Frankenstein attempt. As far as I can see, this approach no longer works.

In researching this kext, you may see a bunch of things about codeless kexts requiring signing. I don’t think this is true. In both this story and my own experimentation, you are able to load an unsigned kext from the /System/Library/Extensions/ folder. However, I was unable to actually get the kext working with my Proxmark, so maybe there is something else I was missing. I also tried a documented alternative approach, unloading the kext that Apple was using: kextunload -b com.apple.driver.AppleUSBCDC (you’ll notice CDC, which is the “old” style of communication for Proxmark). No luck.
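When experimenting with kextunload, it helps to confirm whether the driver is actually still loaded. A minimal sketch, assuming the output of `kextstat` (which lists loaded kexts) contains the bundle identifier as plain text; the helper name `kext_listed` is hypothetical.

```shell
# Succeed if the given bundle identifier appears in kextstat-style text on stdin.
# Hypothetical helper: it only does a fixed-string search, nothing kext-specific.
kext_listed() {
    grep -q -F -- "$1"
}

# Example use on OS X (commented out so the sketch runs anywhere):
# kextstat | kext_listed com.apple.driver.AppleUSBCDC && echo "still loaded"
```

Running the check before and after kextunload shows whether the unload took effect or whether OS X reloaded the driver.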

Stop paddling upstream

It was at this point that I decided to reassess my approach. It turns out that in this thread, there are pre-compiled binaries of proxmark3 for Windows. Awesome.

There are even instructions included about updating from the old firmware, which involves lots of holding the proxmark button down while interacting with the device from the computer. I had to flash the update to the new COM style bootloader and also the “bootrom”, “fullimage”, and “OS”, before the device would be properly recognized, even with the new driver.

Installing the drivers was again a bit of a pain, but not too bad.

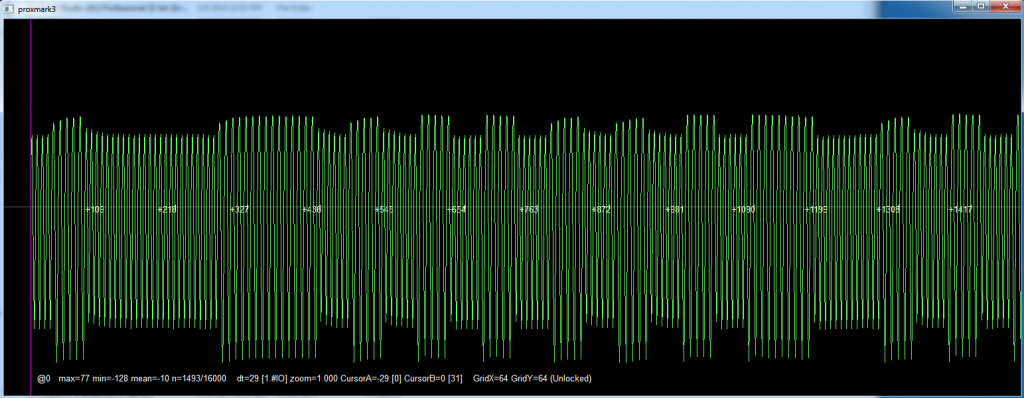

What does this signal mean?

Once the hardware is set up and you’re in action, you’re likely to encounter another problem: how do I read this card and make any sense of it? Unfortunately, the answer doesn’t seem to be super simple. Some LF cards are labeled with things like “HID” or “Indala”, which tells you right away what to use. There also seem to be a lot of cards that qualify as “em4x”, particularly “em410”. There’s a good chance you’re trying to read one of these three, but if not, your best bet is to turn to the forums or look for any sort of labeling that can help you.

Here are a few of the commands I seemed to use most:

hw tune
hw version
hw tune
lf read
data samples 5000
lf hid fskdemod

Be ready for a bit of flakiness: you might have to restart your computer occasionally, or re-plug the proxmark (frequently). Once it’s reading, though, it seems to do so pretty consistently.

Cloning?

Cloning cards is a whole new beast. It seems that many cards are not re-writable, or if they are, you can’t use the standard cloning provided by the proxmark software. This is a shame, because you will see references to “t55x7” cards, but it doesn’t seem possible to easily know what card type you have in your hand.

Conclusion

Generally, the Proxmark3 concept is great. I know how difficult it is to foster a good community that can work across the range of software and hardware necessary for a good Proxmark experience, so I applaud the effort. I hope the tools continue to improve.

I’d really like to see a bit more consistency around OS/driver support, and documentation to aid in identifying RFID cards. Hopefully, I can find time to figure some of this out and put in pull requests to the Proxmark3 repository and help the community.

Do you have experience with the Proxmark3? Does it match mine?