My goal for Mymail-Crypt for Gmail has always been to let everyone use Gmail to send messages simply and privately. I’ve recently had time to conquer one of the bigger challenges that I’ve only poked at before: stopping automatic draft saving.

However, the whole process was an exercise in backtracking, frustration, and bursts of satisfaction. This post is going to be very technical, and I write it in hopes that someone attempting to do something similar with Gmail or general extension hacking can find something useful. Feel free to reach out to me with any questions.

No, this is not how to read your ex-girlfriend’s email, and no, I don’t actually profit from this extension.

AJAX Attack!

Ok. Let’s make Gmail stop uploading drafts. These are sent via XHR (AJAX), so let’s intercept the requests from our extension and stop them from being sent:

window.XMLHttpRequest.prototype.open = function () {
  debugger;
};

Nothing. That’s weird. I see in the debugger, XHR calls going back and forth, but my debugger call isn’t registering.
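For anyone trying the same thing, the usual shape of this kind of interception is to wrap the original methods and decide per request whether to pass it through, rather than replacing them outright. Here is a minimal sketch of that idea. It runs against a stand-in class so it can be tried outside a browser; FakeXHR, installDraftBlocker, and the "draft" URL test are all hypothetical names for illustration, not anything Gmail exposes.

```javascript
// Stand-in for XMLHttpRequest so the sketch runs outside a browser (hypothetical).
class FakeXHR {
  open(method, url) {
    this.method = method;
    this.url = url;
  }
  send(body) {
    FakeXHR.sent.push({ url: this.url, body });
  }
}
FakeXHR.sent = [];

// Wrap open/send on the prototype instead of replacing them outright,
// so requests that are not blocked still reach the original code.
function installDraftBlocker(XHRClass, shouldBlock) {
  const originalOpen = XHRClass.prototype.open;
  const originalSend = XHRClass.prototype.send;

  XHRClass.prototype.open = function (method, url, ...rest) {
    this._blocked = shouldBlock(String(url)); // remember the decision for send()
    return originalOpen.call(this, method, url, ...rest);
  };

  XHRClass.prototype.send = function (body) {
    if (this._blocked) {
      return; // silently drop the request
    }
    return originalSend.call(this, body);
  };
}

// Assumption: draft uploads can be recognized from the URL.
// The real requests may need a different test.
installDraftBlocker(FakeXHR, (url) => url.includes('draft'));

const draftRequest = new FakeXHR();
draftRequest.open('POST', '/mail/?act=draft');
draftRequest.send('secret text');

const otherRequest = new FakeXHR();
otherRequest.open('GET', '/mail/?act=inbox');
otherRequest.send(null);

console.log(FakeXHR.sent.map((r) => r.url));
```

Only the non-draft request ends up in FakeXHR.sent. Of course, this only helps if the patch lands on the same XMLHttpRequest object the page is actually using.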

Ok, I wonder if this is a limitation of Content Script Chrome extensions. I’ve looked at the Content Script documentation before, but let’s try some experiments:

$('body').append(
  '<script type="text/javascript">' +
  'alert("here");' +
  '</script>'
);

Ok, I get an ever so pleasant alert popup on page load. JS DOM injection works.

$('body').append(
  '<script type="text/javascript">' +
  'window.XMLHttpRequest.prototype.send = function(){debugger;}' +
  '</script>'
);

Doesn’t work. “Isolated Worlds” are in play. This is the limit of the content script sandbox. Time to take another approach.
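To make the "Isolated Worlds" idea concrete, here is a rough analogy using Node’s vm module. This is not how Chrome implements it; it is just a sketch in which two contexts share one object (standing in for the DOM) while each keeps its own globals. The names sharedDom, makeWorld, pageWorld, and extensionWorld are made up for the illustration.

```javascript
const vm = require('vm');

// One object shared by both "worlds", playing the role of the DOM.
const sharedDom = { title: 'Inbox' };

// Each context gets its own global object, and therefore its own
// XMLHttpRequest binding. The constructor here is only a stand-in.
function makeWorld() {
  const world = vm.createContext({ dom: sharedDom });
  vm.runInContext(
    `function XMLHttpRequest() {}
     XMLHttpRequest.prototype.send = function () { return "sent"; };`,
    world
  );
  return world;
}

const pageWorld = makeWorld();
const extensionWorld = makeWorld();

// The "extension" patches send() in its own world and edits the shared DOM.
vm.runInContext(
  `XMLHttpRequest.prototype.send = function () { return "blocked"; };
   dom.title = "Changed by extension";`,
  extensionWorld
);

// The page's XMLHttpRequest is a different object, so it is unaffected,
// while the change to the shared DOM object is visible from both sides.
const pageResult = vm.runInContext('new XMLHttpRequest().send()', pageWorld);
const extensionResult = vm.runInContext('new XMLHttpRequest().send()', extensionWorld);
const pageTitle = vm.runInContext('dom.title', pageWorld);

console.log(pageResult, extensionResult, pageTitle);
```

The page still gets "sent" from its own send(), even though it sees the title the extension wrote. That matches what I saw: I can touch the page, but my JavaScript overrides stay on my side of the wall.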

Replace the composition box

So, if I can’t intercept the actual XHR messages, I think I’m going to have to change something on the page in order to prevent drafts from being visible. My first thought is to look at the composition form textbox. A quick look in the debugger shows that the code looks like this for the textbox:

<body g_editable="true" hidefocus="true" contenteditable="" class="editable LW-avf" id=":2ak" style="min-width:0;"><br></body>

I try replacing the :2ak with my own personal id. However, even after I make this change, the draft still gets uploaded. I try changing a few parent id values. Same response.

Higher in the DOM I find the form that is being submitted. When I rename this id value I get an error. Yay. My fuzzing broke the page. I use the debugger to pause on all XHR requests. I confirm that the XHR request contents are essentially blank, as I would expect. This is a good sign.

Now, how do I make the page usable again?

Click hijacking

The first thing I try is to bind a new jQuery .click() handler on things that I think I could click on, notably <a> elements. However, Gmail doesn’t use a lot of <a> elements. I also seemed to sometimes get into some sort of race condition depending on how I was binding .click() events. There seem to be two things at play here:

- jQuery by default will make a queue with event handlers, but this can be overridden

- (again) Content Script Chrome extensions have limitations on manipulating the host page’s JavaScript

Time to pivot... no, not pivot, I’m not a startup. Time to change my strategy yet again.

ctrl+z

Let’s try copying our form. I can leave the cloned form blank so Gmail thinks there is an empty message.

form.parent().prepend(form.clone());

Initially, this is looking pretty good. I can change the id values and hide the form I don’t want Gmail to see. However, it quickly becomes apparent that there is a serious issue with this approach: the text box is not editable. That’s weird. Ugh, part of the form is an iframe. After wasting an embarrassing amount of time trying to force the iframe’s HTML to be what I want, I realize my approach isn’t working because the iframe’s content isn’t loaded yet when I try to clone it. Duh, what if we just wait until it is loaded?

setTimeout(function () {
  var form = $('#canvas_frame').contents().find('[class="fN"] > form').first();
  form.parent().prepend(form.clone());
}, 500);

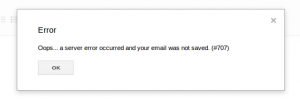

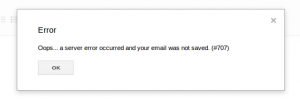

Alright, this is looking good. I can edit the text as I would expect. Ugh, new problem: I’m stuck in some sort of weird state where either the drafts continue to save or I hit my new favorite, the Gmail 707 error. I proceed to fiddle with this, changing exactly how I insert or what I do, for quite some time. It continues to not work. Awesome.
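One aside on the setTimeout above: a fixed 500 ms delay is a guess, and the content may load sooner or later than that. A polling helper is a common alternative. The sketch below is one way to write it, not what the extension actually does; pollUntil, its options, and fakeCheck are hypothetical names, and the scheduler is injectable only so the demo runs deterministically.

```javascript
// Poll `check` until it returns a truthy value, then pass that value to `onReady`.
// `schedule` defaults to setTimeout; it is injectable so the helper can be
// exercised without real timers.
function pollUntil(check, onReady, options = {}) {
  const { intervalMs = 100, maxTries = 50, schedule = setTimeout } = options;
  let tries = 0;

  function attempt() {
    tries += 1;
    const result = check();
    if (result) {
      onReady(result);
    } else if (tries < maxTries) {
      schedule(attempt, intervalMs);
    }
    // After maxTries failed checks the helper gives up silently.
  }

  attempt();
}

// Stand-in for "has the iframe body loaded yet?":
// pretend the content appears on the third check.
let checksRemaining = 3;
const fakeCheck = () => (--checksRemaining <= 0 ? { body: 'ready' } : null);

let found = null;
// A synchronous scheduler keeps this demo deterministic; in an extension
// the default setTimeout would be used instead.
pollUntil(fakeCheck, (value) => { found = value; }, { schedule: (fn) => fn() });

console.log(found);
```

In the extension, the check would presumably wrap the same form lookup used above and return the form only once the iframe body exists.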

Take a break (always undervalued).

ctrl+z

In fiddling with the cloning, I’m somehow slowly drawn back to my previous strategy of just renaming the composition form. Ok, so the major issue with this is that whenever I click any link I get a 707 error unless the form has the proper id. Let’s take a step back and see if somehow we can take advantage of the hierarchical structure of the DOM. What if I bind a mousedown on the main “canvas_frame” iframe object?

$('#canvas_frame').contents().mousedown(function () {
  alert("I'm CLICKING");
});

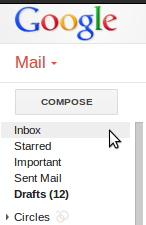

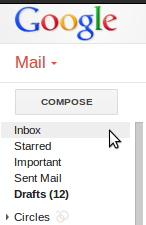

Boom. This works exactly as I want it to. Anywhere I click, I’m getting the alert. Now let’s try to refine this sledgehammer approach to make it useful. I target the left-hand side of the Gmail window.

Rather than let you hit a 707 error, I’m going to go ahead and assume that a click there means you want to navigate away. If you don’t want drafts to autosave, then navigating away means the draft should not be saved. So let’s rebind this left-hand side to clear the message first:

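$('#canvas_frame').contents().find('[class="nH oy8Mbf nn aeN"]').mousedown(clearAndSave);

// Blank the message body, then let Gmail save the (now empty) draft.
function clearAndSave() {
  $('#canvas_frame').contents().find('#gCryptForm').find('iframe').contents().find('body').text('');
  saveDraft();
}

// Give the form its original id back and click the save button.
// formId is defined elsewhere; it is assumed to hold the id the form had
// before it was renamed to gCryptForm.
function saveDraft() {
  var form = $('#canvas_frame').contents().find('#gCryptForm');
  form.attr('id', formId);
  $('#canvas_frame').contents().find('div[class="dW E"] > :first-child > :nth-child(2)').click();
}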

This works well. I similarly blank out other navigation links. To save drafts, I bind the appropriate button to call the saveDraft function. To send a message I again save a draft and fake click the send button. jQuery provides the powerful ability to generically select the Send button:
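If the rename-and-restore trick is hard to follow in jQuery form, here is the same flow boiled down to plain objects. This is a simplified model under my own assumptions, not Gmail’s real behavior: the form object, the ':2ab' id, gmailSave, and the uploads list are all invented for the illustration.

```javascript
// Plain-object stand-in for the compose form (hypothetical; the real code uses jQuery).
const form = { id: ':2ab', body: 'my secret message' };
const uploads = []; // what would have been sent to the server

// Simplified stand-in for Gmail's save: it only works when the form
// still has the id Gmail expects.
function gmailSave(currentForm, expectedId) {
  if (currentForm.id !== expectedId) {
    return false; // in the post, this situation shows up as a 707 error
  }
  uploads.push(currentForm.body);
  return true;
}

const originalId = form.id; // plays the role of formId in the post
form.id = 'gCryptForm';     // hide the form from autosave

// While the form is renamed, a save attempt uploads nothing.
const autosaveWorked = gmailSave(form, originalId);

// Navigating away: blank the text, restore the id, then let the save run.
function clearAndSave() {
  form.body = '';
  form.id = originalId;
  return gmailSave(form, originalId);
}

const navigationSaveWorked = clearAndSave();

console.log(autosaveWorked, navigationSaveWorked, uploads);
```

The only thing that ever reaches uploads is the empty string, which is the point: Gmail gets a draft, but not the message.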

$('#canvas_frame').contents().find('div[class="dW E"] > :contains("Send")');

General Approach

You may have noticed that I search the Gmail page based on class. In my experience (too many hours), these are less likely to change between iterations. I try to make my jQuery selections as generic as possible but still get the exact element I want; it’s often hard to find the appropriate balance.
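One detail worth knowing when striking that balance: an attribute selector like [class="dW E"] compares the whole class string, while a class selector like .dW.E only asks that each class be present. The sketch below mimics both rules in plain JavaScript so the difference is easy to see. The element objects and the extra class name are made up, and whether Gmail ever adds or reorders classes is an assumption on my part.

```javascript
// Minimal stand-ins for elements: just a class attribute string.
const sendRow = { className: 'dW E' };
const sendRowAfterUpdate = { className: 'dW E newClass' }; // hypothetical extra class

// Mirrors the attribute selector [class="dW E"]: the whole string must match.
function matchesExactClassAttr(el, value) {
  return el.className === value;
}

// Mirrors the class selector .dW.E: every listed class must be present,
// in any order, and extra classes are allowed.
function matchesClassTokens(el, tokens) {
  const present = new Set(el.className.split(/\s+/).filter(Boolean));
  return tokens.every((token) => present.has(token));
}

const results = {
  exactBefore: matchesExactClassAttr(sendRow, 'dW E'),
  exactAfter: matchesExactClassAttr(sendRowAfterUpdate, 'dW E'),
  tokensBefore: matchesClassTokens(sendRow, ['dW', 'E']),
  tokensAfter: matchesClassTokens(sendRowAfterUpdate, ['dW', 'E']),
};

console.log(results);
```

The exact-string form is stricter, which helps when you want one specific element, but it stops matching as soon as the class list changes in any way. The token form is more forgiving and may match more than you intended.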

There’s some other craftiness in my solution, but this article is already far longer than I had anticipated, and shows the points I consider most important.

Thoughts

It works! The Gmail interface is not intended to be manipulated by people. Figuring out how the complex functionality of the application works is difficult and takes a lot of guess and check.

I’ve added an experimental option to disable draft autosaving in the latest version of Mymail-Crypt for Gmail (source, Chrome Web Store extension), using the approach described here.